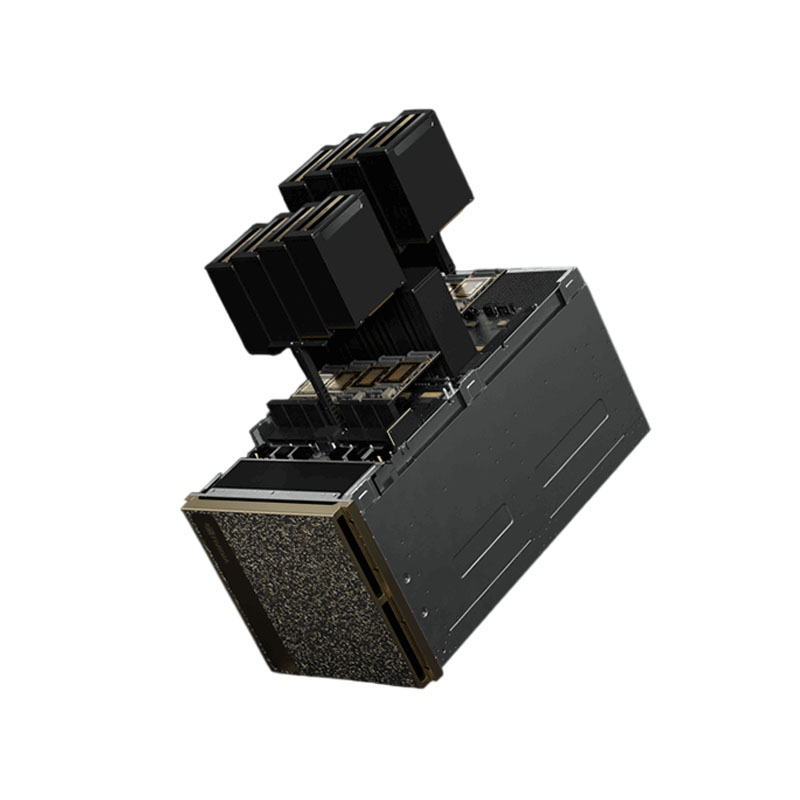

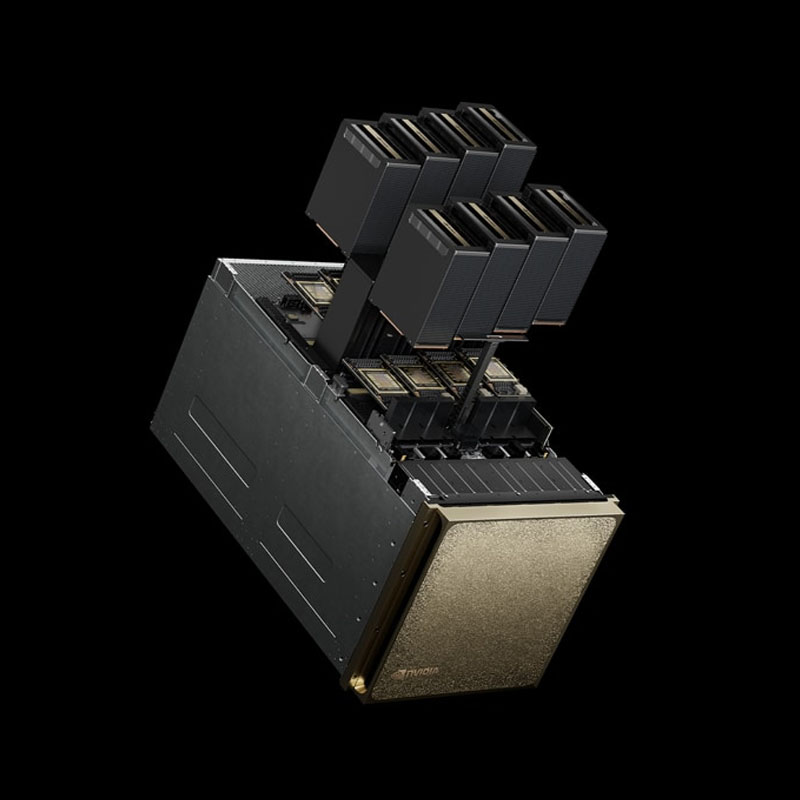

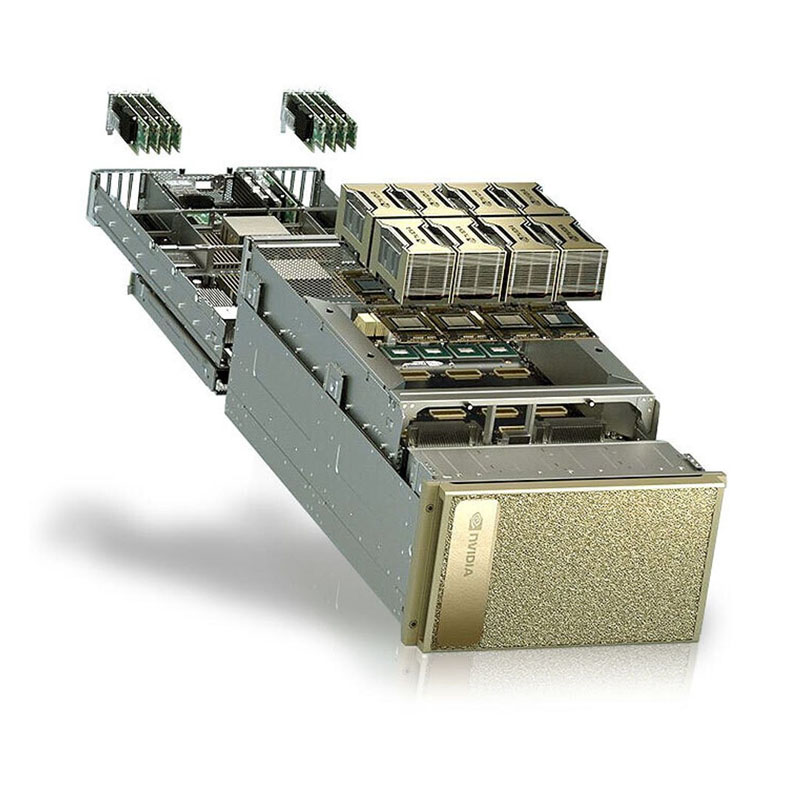

The NVIDIA DGX B200 System is a next-generation enterprise AI supercomputer and a core building block for the AI factory—purpose-built to accelerate Generative AI, LLM training/fine-tuning, and high-throughput inference. It integrates 8× NVIDIA Blackwell (B200) GPUs with a high-speed 5th-gen NVLink / NVSwitch fabric and a balanced CPU, memory, storage, and networking design—so teams can move from development to production faster on a unified platform.

Key Highlights

- 8× NVIDIA B200 (Blackwell) GPUs delivering 1,440GB total GPU memory with 64 TB/s HBM3e bandwidth for large models and long-context workloads.

- 5th-gen NVLink + NVSwitch with 14.4 TB/s aggregate GPU-to-GPU bandwidth for fast all-to-all multi-GPU scaling inside the system.

- Blackwell Tensor Core performance (as listed by NVIDIA) up to 144 PFLOPS FP4 and 72 PFLOPS FP8 for transformer-heavy GenAI pipelines.

- Dual Intel® Xeon® Platinum 8570 CPUs (112 cores total) for strong host compute and I/O throughput.

- 2TB system memory (configurable to 4TB) for large datasets and demanding end-to-end training stacks.

- High-speed networking via ConnectX-7 (8× single-port through 4× OSFP) for scale-out cluster deployments.

- Enterprise storage design with OS mirrored NVMe (RAID1) plus high-speed internal NVMe cache (per DGX B200 documentation).

- Data-center ready power profile (NVIDIA lists ~14.3 kW max).