The NVIDIA DGX A100 System is an enterprise AI supercomputer engineered for AI training, AI inference, and HPC at scale. Built around 8× NVIDIA A100 Tensor Core GPUs connected by high-speed NVLink/NVSwitch, it delivers multi-GPU performance in a single, fully integrated platform. It is well suited to deep learning, large-scale analytics, and scientific computing workloads that demand fast time-to-results.

Key Highlights

- 8× NVIDIA A100 Tensor Core GPUs for high-throughput training and inference across modern AI workloads

- NVLink / NVSwitch fabric for ultra-fast GPU-to-GPU communication and efficient multi-GPU scaling inside the system

- Optimized for AI and HPC: deep learning training, inference serving, simulation, and large-scale data analytics

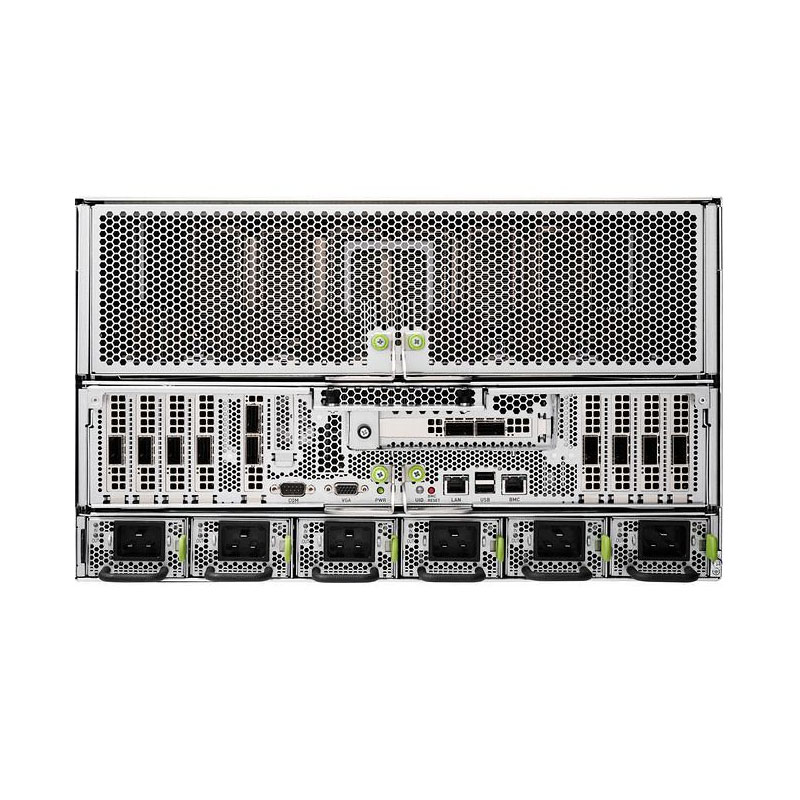

- Data-center ready integration: compute, networking, and high-speed storage in a single validated system design

- DGX software stack support for accelerated deployment and management (drivers, libraries, enterprise tooling)

- Scales out to DGX clusters and SuperPOD architectures for multi-node expansion when you need more capacity