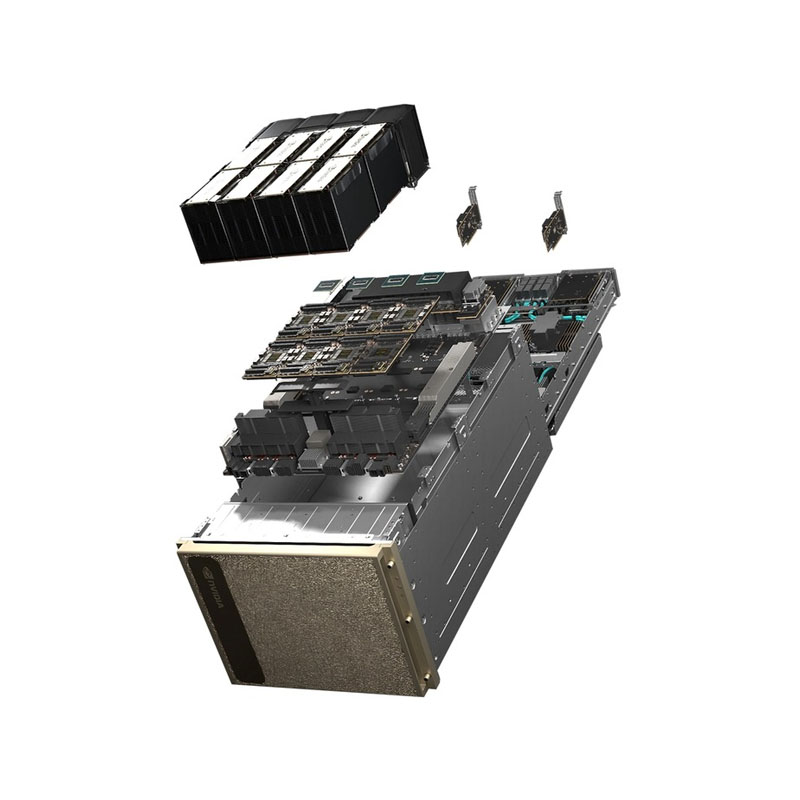

The NVIDIA DGX H200 System is an enterprise AI supercomputer built to accelerate Generative AI, LLM training/inference, and HPC at scale. It integrates 8× NVIDIA H200 Tensor Core GPUs with a high-speed NVLink/NVSwitch fabric, delivering massive GPU memory capacity and fast GPU-to-GPU communication—ideal for training and serving large models with higher throughput and better efficiency in a single, fully optimized platform.

Key Highlights

- 8× NVIDIA H200 Tensor Core GPUs with 141GB each ( 1,128GB total GPU memory ) for large models and long-context workloads

- Up to 32 petaFLOPS FP8 AI performance for high-throughput transformer workloads

- 4× NVIDIA NVSwitch for high-bandwidth multi-GPU scaling inside the system

- Dual Intel Xeon Platinum 8480C CPUs (112 cores total) + 2TB DDR5 system memory for strong host compute and data pipelines

- High-speed networking with NVIDIA ConnectX-7 (up to 400Gb/s InfiniBand/Ethernet) for fast scale-out to DGX clusters/SuperPOD

- Enterprise NVMe storage (OS mirrored NVMe + high-speed U.2 NVMe data cache in common configs)

- System power up to ~10.2kW max (data-center ready)